2026

BBANet: Bilateral biological auditory-inspired neural network for heart sound classification

Yang Tan, Haojie Zhang, Jingwen Xu, Hanhan Wu, Kun Qian, Bin Hu, Yoshiharu Yamamoto, Björn W. Schuller.

Engineering Applications of Artificial Intelligence, 2026.

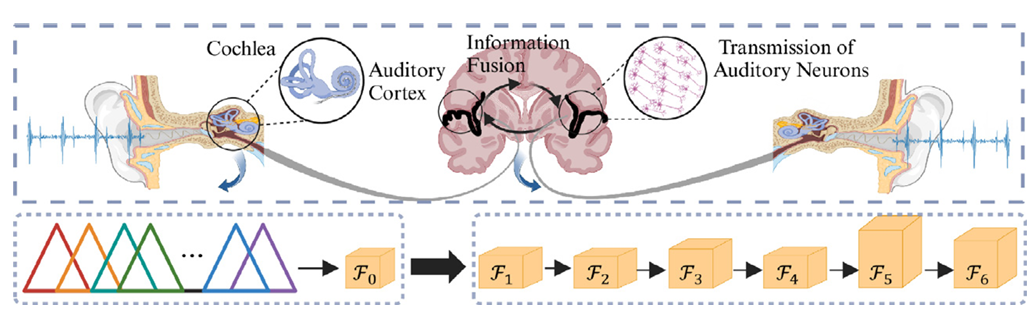

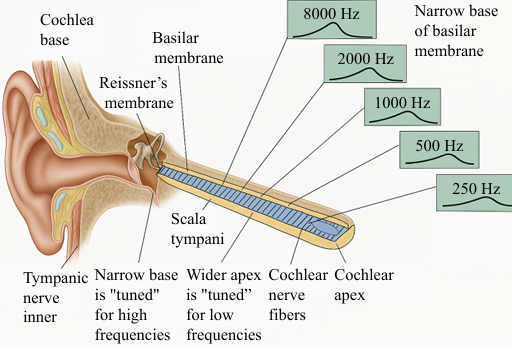

Abstract: Cardiovascular Diseases (CVDs) pose a significant global health challenge. Heart sound classification using computer audition technology holds promise for rapid early screening of CVDs, saving healthcare resources and reducing detection costs. However, artificial intelligence models that simulate expert auscultation based on biological hearing mechanisms are still lacking. In this study, we develop a multi-frequency-channel-spatial-aware bilateral biological auditory-inspired neural network (BBANet) to fully exploit and integrate inter-layer and left–right information for the classification of short heart sound cycles. The bilateral network comprises a cochlea-like module and a multi-frequency-channel-spatial-aware attention module in each of the left and right pathways, and two information fusion modules between the layers. Experimental results show that BBANet achieves a sensitivity of 92.71%, a specificity of 97.19%, an accuracy of 96.22%, and an Unweighted Average Recall (UAR, balanced accuracy) of 94.95%. The proposed BBANet outperforms the reimplemented state-of-the-art models and the public classical models by 7.41% and 3.84% in average UAR, respectively, which demonstrates the classification potential of models based on biological auditory principles. More importantly, BBANet has the potential to reconstruct the structure of the auditory cortex based on biological auditory transmission and processing within and between the left and right auditory pathways. Therefore, this work helps to bring computer neural networks closer to the human biological auditory system, offering the possibility of reconstructing the auditory cortex from neural networks to better simulate and understand the complex computing mechanisms of the human auditory system. Code has been made available at https://github.com/tanyang89/BBANet.
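For readers unfamiliar with UAR, the sketch below shows how the four metrics named in the abstract relate in a binary task, where UAR is the mean of sensitivity and specificity. It is an illustration only and is not taken from the BBANet repository; the function names and the confusion-matrix counts are hypothetical. The only figures from the abstract are the reported sensitivity and specificity, whose mean reproduces the reported UAR.

```python
def binary_metrics(tp, fn, tn, fp):
    """Compute sensitivity, specificity, accuracy and UAR from binary counts.

    tp/fn: positive-class samples classified correctly/incorrectly
    tn/fp: negative-class samples classified correctly/incorrectly
    """
    sensitivity = tp / (tp + fn)          # recall of the positive class
    specificity = tn / (tn + fp)          # recall of the negative class
    accuracy = (tp + tn) / (tp + fn + tn + fp)
    uar = (sensitivity + specificity) / 2  # unweighted mean of per-class recalls
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
        "uar": uar,
    }


def uar_from_recalls(sensitivity, specificity):
    """UAR for a binary task, given the two per-class recalls."""
    return (sensitivity + specificity) / 2


# Hypothetical counts, chosen only to illustrate the formulas.
example = binary_metrics(tp=45, fn=5, tn=190, fp=10)

# Sensitivity and specificity as reported in the abstract (in percent).
reported_uar = uar_from_recalls(92.71, 97.19)
print(round(reported_uar, 2))  # 94.95, matching the reported UAR
```

Because UAR weights both classes equally, it is less affected by class imbalance than accuracy, which is why the two values differ in the abstract.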

2025

Can Information Representations Inspired by the Human Auditory Perception Benefit Computer Audition-based Disease Detection? An Interpretable Comparative Study

Zhihua Wang, Haojie Zhang, Yang Tan, Rui Wang, Kun Qian, Bin Hu, Yoshiharu Yamamoto, and Björn W. Schuller.

IEEE Journal of Biomedical and Health Informatics, 2025.

Abstract: Computer audition-based methods have attracted a great deal of attention in the field of disease detection due to their significant advantages, e.g., non-invasive and convenient operation. Among them, the introduction of information representations inspired by human auditory perception, e.g., Mel-frequency transformation, gives these methods great potential to approach and even exceed the limits of the human auditory system. However, according to previous research, it remains challenging to fairly assess whether information representations inspired by human auditory perception have a significant positive effect on disease detection. Moreover, performance differences among various information representations and their underlying causes are yet to be thoroughly investigated and analyzed. To this end, we propose an interpretable comparative study on information representations inspired by human auditory perception for disease detection. First, the detection accuracy of different information representations is investigated on two sound datasets (one for a psychological disease and one for a physiological disease) based on the classical model and the proposed Temporal-Spatial Multi-Scale Perception Network. Then, the noise robustness of these information representations is compared by introducing Gaussian noise with varying signal-to-noise ratios (SNRs). Finally, by combining the human auditory perception mechanism and explainable AI techniques, we analyze the reasons for performance differences among various information representations from qualitative and quantitative perspectives. Experimental results demonstrate that information representations inspired by human auditory perception can improve the performance of disease detection with statistical significance. Furthermore, Gammatone Frequency Cepstral Coefficients (GFCCs) outperform other information representations by achieving the highest accuracy, particularly under noisy conditions.
The interpretable results further reveal the underlying reasons...
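The noise-robustness comparison described in this abstract relies on adding Gaussian noise at a chosen SNR. A minimal sketch of that general technique follows, assuming NumPy and a one-dimensional signal; it is not the authors' implementation, and the function names, test tone, and sample rate are hypothetical choices made for illustration.

```python
import numpy as np


def add_gaussian_noise(signal, snr_db, rng=None):
    """Return `signal` plus white Gaussian noise at the target SNR (in dB)."""
    rng = np.random.default_rng() if rng is None else rng
    signal = np.asarray(signal, dtype=float)
    signal_power = np.mean(signal ** 2)
    # SNR_dB = 10 * log10(P_signal / P_noise)  =>  P_noise = P_signal / 10^(SNR_dB / 10)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=signal.shape)
    return signal + noise


def measured_snr_db(clean, noisy):
    """Empirical SNR in dB, treating `noisy - clean` as the noise."""
    clean = np.asarray(clean, dtype=float)
    noise = np.asarray(noisy, dtype=float) - clean
    return 10.0 * np.log10(np.mean(clean ** 2) / np.mean(noise ** 2))


# Hypothetical test signal: one second of a 100 Hz tone sampled at 16 kHz.
rng = np.random.default_rng(0)
t = np.arange(16000) / 16000.0
clean = np.sin(2.0 * np.pi * 100.0 * t)

noisy = add_gaussian_noise(clean, snr_db=10.0, rng=rng)
measured = measured_snr_db(clean, noisy)  # close to the 10 dB target
```

Repeating the call over a range of `snr_db` values and re-extracting each representation from the noisy signal gives one way to compare robustness across representations.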